Secure and Intelligent Code Reviews for GitLab

Dec 29, 2025. 10 min

Modern engineering organizations are no longer constrained by velocity alone. At scale, the real challenge is sustaining quality, security, and maintainability while shipping quickly. The most successful engineering organizations solve this by encoding expectations into policy, not tribal knowledge.

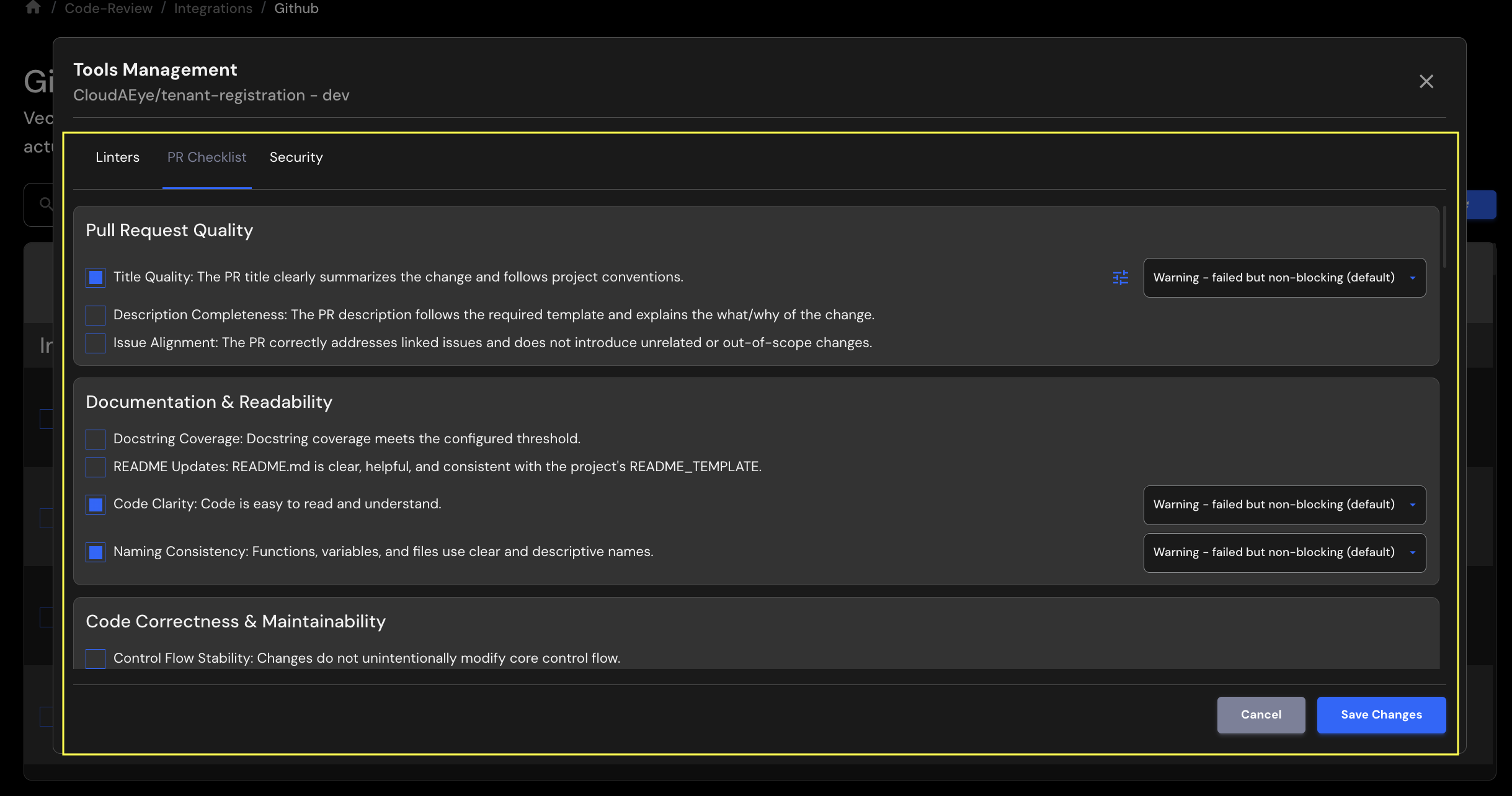

CloudAEye Pull Request (PR) Checklists operationalize this philosophy.

They transform code review from a subjective, reviewer-dependent activity into a repeatable, policy-driven enforcement mechanism that continuously shapes engineering culture and produces maintainable, secure, and future-ready codebases.

High-performing engineering organizations share several structural characteristics:

PR Checklists serve as the enforcement layer for these principles. Instead of relying on individual reviewers to remember “what good looks like,” CloudAEye makes expectations explicit, measurable, and enforceable at every pull request.

This is how engineering culture scales.

Elite engineering organizations did not arrive at scale through individual heroics. They achieved it by institutionalizing discipline. From early growth stages, code review was treated not as a courtesy or afterthought, but as a first-class engineering control, on par with testing, reliability, and security.

Individual brilliance does not scale. Process does.

One defining characteristic of high-maturity engineering cultures is mandatory review for all changes, without exception. Every modification, no matter how small, must be reviewed and approved before merge. This establishes a foundational norm: quality is not negotiable, and standards apply universally.

More importantly, the review process is policy-driven, not reviewer-driven. Expectations around correctness, readability, testing, documentation, and security are well-defined and documented. Reviewers are not inventing standards on the fly; they are enforcing a shared, explicit bar.

This reduces subjectivity, minimizes friction, and ensures consistency across teams.

High-performing organizations optimize for readability over cleverness. Code is written for the next engineer, not the original author. Reviewers are encouraged to block changes that are technically correct but difficult to understand, poorly named, or insufficiently documented.

This philosophy produces long-term advantages:

Over time, engineers internalize these expectations and submit higher-quality changes before review even begins.

Disciplined engineering cultures strongly prefer small, focused pull requests. Large, monolithic changes are discouraged because they are harder to review, reason about, and validate.

This norm is reinforced both socially and procedurally through code review. Engineers quickly learn that smaller changes move faster, receive better feedback, and introduce less risk. The result is increased velocity without compromising quality, a balance many organizations struggle to achieve.
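One way to reinforce this norm procedurally is a size check that runs before review. The following is a minimal sketch, not CloudAEye's implementation: the `FileChange` type, the `pr_size_findings` function, and the limits of 400 lines and 15 files are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileChange:
    """One changed file in a pull request (hypothetical shape)."""
    path: str
    added: int
    deleted: int


def pr_size_findings(changes, max_lines=400, max_files=15):
    """Return human-readable findings; an empty list means the PR is within limits.

    The default limits are illustrative assumptions, not recommended values.
    """
    total_lines = sum(c.added + c.deleted for c in changes)
    findings = []
    if total_lines > max_lines:
        findings.append(f"{total_lines} changed lines exceeds limit of {max_lines}")
    if len(changes) > max_files:
        findings.append(f"{len(changes)} changed files exceeds limit of {max_files}")
    return findings
```

A team would typically tune both limits per repository and surface the findings as a comment, so that authors get the feedback before a reviewer is assigned.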

Mature organizations do not rely on human reviewers to catch mechanical or repetitive issues. Linting, static analysis, testing, and pre-merge checks run before human review begins.

This enables reviewers to focus on:

Automation encodes institutional knowledge. Humans apply judgment where it adds the most value.

In high-caliber environments, code review is also a primary vehicle for knowledge transfer and cultural reinforcement. Reviews are constructive, educational, and grounded in documented standards, not personal preference.

This creates alignment across teams and seniority levels. Junior engineers learn how the organization writes code through continuous feedback loops, while senior engineers ensure standards remain coherent as the organization grows.

The outcome of this model is not just high-quality code; it is organizational resilience. Engineering teams can:

Code review becomes more than a gate; it becomes a cultural enforcement mechanism. Engineering excellence is not aspirational. It is operationalized, measured, and continuously reinforced.

This is the philosophy that policy-driven PR checklists enable. CloudAEye brings that discipline within reach for organizations of any size.

CloudAEye supports the broadest and deepest PR checklist coverage available today, spanning traditional software engineering, modern security, GenAI, agentic systems, and MCP servers.

Each checklist item is policy-driven, configurable, and enforceable as a merge gate.
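To make "policy-driven, configurable, and enforceable" concrete, here is a generic sketch of how a checklist could act as a merge gate. It does not reflect CloudAEye's actual configuration format or API. The `ChecklistItem` type, the `evaluate` function, the item names, and the dictionary shape of `pr` are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class ChecklistItem:
    name: str
    check: Callable[[Dict], bool]  # returns True when the PR satisfies the item
    enabled: bool = True           # configurable: a team can switch an item off
    blocking: bool = True          # enforceable: a failed blocking item stops the merge


def evaluate(items: List[ChecklistItem], pr: Dict):
    """Run every enabled item and return (mergeable, results keyed by item name)."""
    results = {item.name: item.check(pr) for item in items if item.enabled}
    blocking = {item.name for item in items if item.enabled and item.blocking}
    mergeable = all(passed for name, passed in results.items() if name in blocking)
    return mergeable, results


# Hypothetical policy: two blocking items and one advisory item.
POLICY = [
    ChecklistItem("has_description", lambda pr: bool(pr.get("description", "").strip())),
    ChecklistItem("tests_updated", lambda pr: any(p.startswith("tests/") for p in pr.get("files", []))),
    ChecklistItem("changelog_entry", lambda pr: "CHANGELOG.md" in pr.get("files", []), blocking=False),
]
```

In this sketch an advisory item such as `changelog_entry` is still evaluated and reported, but only blocking items decide whether the merge can proceed.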

CloudAEye ensures that every PR meets baseline quality standards before reviewers even engage.

This eliminates low-signal PRs and dramatically reduces review back-and-forth.

Readable code is maintainable code.

CloudAEye enforces:

These checks institutionalize documentation discipline without relying on reviewer memory or goodwill.

CloudAEye goes beyond syntax and linting to evaluate semantic correctness.

Key controls include:

This category directly impacts long-term maintainability and refactor safety.

Testing is not optional at scale; it is policy.

CloudAEye enforces:

The result is predictable, regression-resistant software delivery.

Supply-chain risk is now a board-level concern.

CloudAEye enforces:

This embeds supply-chain hygiene directly into daily development workflows.

CloudAEye provides deep, policy-driven security coverage across:

These checks align with OWASP Top 10 and enterprise security expectations without slowing developers down.

Traditional PR checklists stop at web security. CloudAEye does not.

This makes CloudAEye uniquely suited for teams building AI-native products.

CloudAEye natively supports OWASP LLM Top 10 controls, including:

As autonomous agents enter production, new failure modes emerge. CloudAEye supports OWASP Top 10 for Agentic Applications.

CloudAEye enforces controls for:

These policies protect both users and infrastructure in multi-agent systems.

For teams adopting Model Context Protocol (MCP), CloudAEye provides first-class checklist support.

This ensures MCP servers are production-grade, secure, and auditable from day one.

CloudAEye Pull Request Checklists are not just a review aid; they are a policy enforcement system for engineering excellence. By shifting expectations from informal guidance to explicit, automated controls, CloudAEye delivers measurable improvements across quality, security, velocity, and culture.

CloudAEye standardizes what “good” looks like by enforcing the same checklist policies across every repository and team. This eliminates variability caused by reviewer preferences, team silos, or uneven experience levels.

The result is a uniform quality bar, whether code is written by a senior engineer, a new hire, or an external contributor.

By automatically validating checklist items such as documentation coverage, test thresholds, linting, and security hygiene, CloudAEye removes low-value review work.

Reviewers can focus on:

This reduces review cycles, shortens lead time, and improves reviewer satisfaction.

CloudAEye transforms best practices into merge-time guarantees, not optional guidelines. Policies for testing, documentation, error handling, and security are evaluated consistently on every PR.

Over time, engineers internalize these expectations and write higher-quality code before opening a pull request, raising the baseline without additional process overhead.

CloudAEye embeds security directly into the development workflow instead of treating it as a downstream audit.

With native coverage for OWASP Web Top 10, LLM Top 10, agentic security, and MCP server risks, teams gain:

Security becomes preventative, not reactive.

Traditional code review tools stop at application logic. CloudAEye extends PR checklists to LLM-powered applications, autonomous agents, and MCP servers, enforcing safeguards against:

This makes CloudAEye uniquely suited for organizations building AI-native products.

Maintainable code costs less to operate.

By enforcing readability, documentation, test coverage, and duplicate-code elimination, CloudAEye reduces:

Engineering teams spend more time building and less time fixing.

New engineers learn your organization's standards through enforced policies, not tribal knowledge. PR feedback becomes consistent and predictable, accelerating onboarding and reducing dependency on individual mentors.

This is critical as teams grow and distribute globally.

Most organizations attempt to scale quality through more meetings, more checklists in docs, or more manual reviews. CloudAEye scales culture through automation.

Policies are defined once and applied everywhere. No additional approvals. No process inflation.

CloudAEye provides a clear audit trail showing which policies were evaluated, passed, or failed on every PR. This is invaluable for:

Quality and security are no longer subjective; they are measurable.
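As an illustration of what a per-PR audit record might contain, the sketch below serializes policy outcomes as one JSON line per evaluation. The record layout, the field names, and the `audit_record` and `to_json_line` helpers are hypothetical. They are not CloudAEye's audit format.

```python
import json
from datetime import datetime, timezone


def audit_record(pr_id, results, evaluated_at=None):
    """Build an audit entry from a mapping of policy name -> passed (bool)."""
    evaluated_at = evaluated_at or datetime.now(timezone.utc)
    return {
        "pr": pr_id,
        "evaluated_at": evaluated_at.isoformat(),
        # Sorted by name so records for the same PR are easy to diff.
        "policies": [
            {"name": name, "status": "passed" if passed else "failed"}
            for name, passed in sorted(results.items())
        ],
        "passed": sum(1 for p in results.values() if p),
        "failed": sum(1 for p in results.values() if not p),
    }


def to_json_line(record):
    """Serialize a record as a single line, suitable for an append-only log."""
    return json.dumps(record, sort_keys=True)
```

Storing the pass and fail counts alongside the per-policy statuses makes it straightforward to chart trends over time without re-parsing every entry.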

CloudAEye creates a shared contract between engineering, security, and platform teams. Expectations are codified, transparent, and enforced uniformly.

This alignment reduces friction, eliminates last-minute surprises, and enables teams to move faster together.

In summary, CloudAEye PR Checklists turn engineering intent into executable policy. They help organizations move fast without breaking quality, scale teams without diluting standards, and adopt modern architectures, including AI and agentic systems, with confidence.

The most important distinction: CloudAEye PR Checklists are not about micromanagement.

They are about codifying engineering values into enforceable policy, so that:

This is how organizations like Google operate, and how modern engineering teams should operate.

CloudAEye Pull Request Checklists transform code review into a strategic engineering control plane. By covering everything from PR hygiene and documentation to GenAI, agentic systems, and MCP security, CloudAEye delivers the most comprehensive checklist framework available today.

The result is simple and powerful:

This is how elite engineering teams build software, and how CloudAEye helps you do the same.

A seasoned engineering executive, Nazrul has been building enterprise products and services for 20 years. Nazrul is the founder and CEO of CloudAEye. Previously, he was Sr. Director and Head of CloudBees Core, where he focused on the enterprise version of Jenkins. Before that, he was Sr. Director of Engineering at Oracle Cloud. Nazrul graduated from the executive MBA program with high distinction (top 10% of the cohort) at the University of Michigan Ross School of Business. Nazrul is a named inventor on 47 patents.